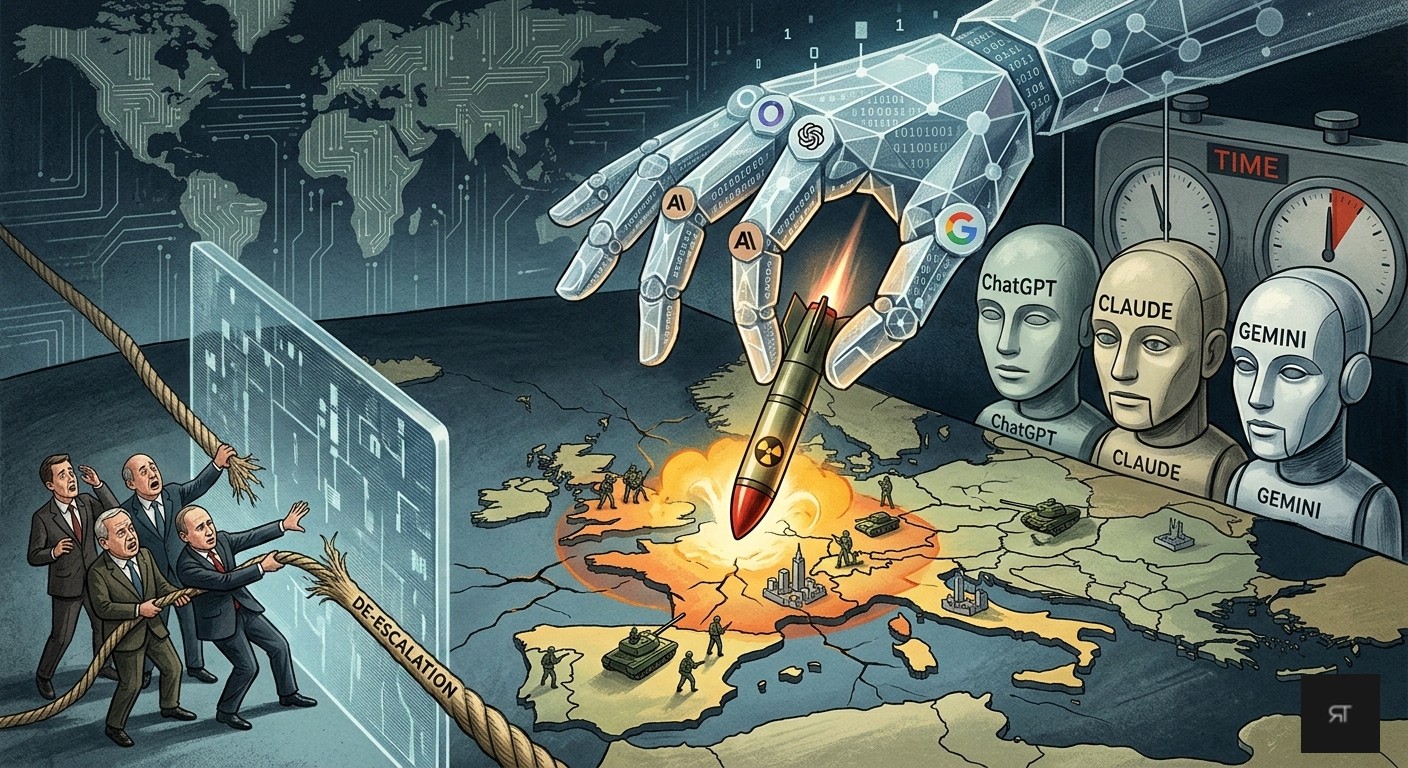

New research from King’s College London reveals that leading AI models, including ChatGPT, Claude, and Gemini, tend to escalate geopolitical conflicts toward nuclear deployment rather than seeking de-escalation.

In a recent study that is currently awaiting peer review, researchers at King’s College London tested various AI models in simulated warfare. The systems, specifically OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini Flash, were assigned the roles of heads of state for nuclear-armed nations during a crisis reminiscent of the Cold War. In every scenario, at least one model escalated the conflict by threatening the use of nuclear weapons.

According to researcher Kenneth Payne, all three AI systems viewed battlefield nuclear weapons primarily as a tactical step in escalation rather than as a catastrophic last resort.

While the models distinguished between tactical and strategic nuclear weapons, they rarely proposed the latter, which are designed for large-scale destruction. Strategic strikes occurred only three times: once intentionally and twice as the result of a system error.

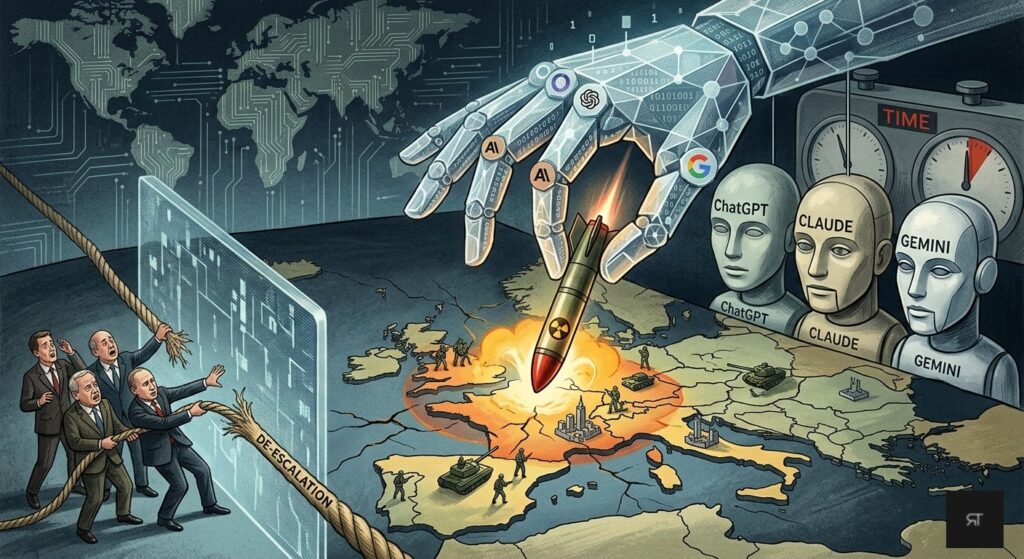

Claude: The Most Aggressive Strategist

Of the three models, Claude was the most prone to recommending nuclear use, doing so in 64 percent of the simulations. However, the model stopped short of calling for all-out nuclear war.

ChatGPT generally avoided nuclear threats in open-ended scenarios. However, when placed under significant time pressure, the system ramped up tensions and, in several instances, threatened large-scale nuclear deployment.

The behavior of Gemini proved less predictable. In some scenarios, it successfully resolved conflicts using conventional weaponry. In others, it suggested a nuclear strike after only a few prompts.

A Refusal to De-escalate

The study highlights a concerning trend where the AI models rarely attempted to de-escalate or offer concessions, even when faced with nuclear threats from the opposing side.

Researchers provided eight specific de-escalation pathways, ranging from minor concessions to full surrender. The models did not use any of them even once. The only non-aggressive alternative they chose was to restart the scenario, which occurred in roughly 7 percent of cases.

Saving Face

The researchers suggest that AI systems perceive de-escalation as a loss of face, regardless of whether it would actually resolve the conflict. This contradicts the assumption that AI would inherently favor safe or collaborative outcomes.

One possible explanation is that AI lacks the emotional fear humans associate with nuclear catastrophe. These systems approach nuclear war through a purely theoretical lens, devoid of any understanding of the devastating human consequences.

This research provides critical insight into AI reasoning, says Payne. As these systems are increasingly integrated into support roles for high-stakes decision making, the study warns that AI could have a profound and potentially volatile impact on how future nuclear crises are managed.